Introduction

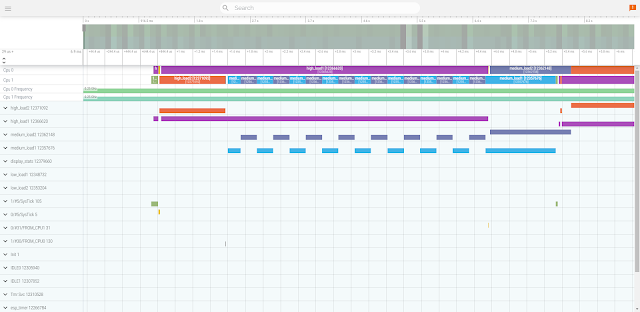

In the era of large language models boasting massive context windows, developers have fallen into a dangerous debugging pattern: dropping a 10,000-line raw execution log directly into a chat window alongside their source code.

Once an error gets into a chat's memory, that memory becomes less and less useful.

Sometimes a model can correct itself, but more often the error triggers a kind of "context rot" that wastes both time and tokens. The chat doesn't break down just because it's too long; it breaks down because it fills up with its own contradictions.

When you dump a massive log into a chat, the session collapses for two main reasons:

- It argues with itself: The model gets distracted by its own assumed failures. Instead of actually fixing the problem, it starts debating its previous mistakes.

- It grabs old, broken ideas: As the conversation drags on, the model starts pulling flawed variables and old logic from earlier in the chat, completely missing the actual current state of the system.

To bypass this bottleneck, stop treating the context window as a dumping ground and start treating it as a carefully curated working memory. The Sequential Log Analysis Protocol (SLAP) is a structured, step-by-step framework that guides an LLM agent through massive logs without triggering context collapse.

Technical Foundation: Iterative State Tracking

The core idea of this protocol is to offload state bloat from the active conversation history into the filesystem. By pairing the source log file with two external Markdown tracking files, scratchpad.md and analysis.md, we externalize the model's long-term memory.

The chat window then holds a much smaller working set with fewer details to track, which makes the model's reasoning job easier. The files act as a stable, durable storage layer, allowing debugging sessions to pause, resume, or move to a fresh context window without losing analytical state.
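As a minimal sketch of this storage layer, assuming Python and two hypothetical helper names (`ensure_state_files` and `append_note`, neither of which is part of the protocol itself), the tracking files can be created once and then only ever appended to:

```python
from pathlib import Path


def ensure_state_files(directory: Path) -> tuple[Path, Path]:
    """Create scratchpad.md and analysis.md if missing; never truncate them."""
    directory.mkdir(parents=True, exist_ok=True)
    paths = (directory / "scratchpad.md", directory / "analysis.md")
    for path in paths:
        # touch() leaves existing content intact, so a resumed session
        # keeps everything written by earlier sessions.
        path.touch(exist_ok=True)
    return paths


def append_note(path: Path, note: str) -> None:
    """Append one entry so earlier findings are never overwritten."""
    with path.open("a", encoding="utf-8") as handle:
        handle.write(note.rstrip("\n") + "\n")
```

Appending rather than rewriting is a design choice made for this sketch: it keeps the files usable as a history that a fresh session can read from top to bottom.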

The System Prompt: Rules of Engagement

To execute this workflow, seed a completely fresh chat session with the following operational framework. Provide the log filename only.

# Debugging Protocol: Sequential Log Analysis

This is a log analysis workflow. Your role is to help the developer understand the provided log and point out when the log diverges from expected flows and results.

## Setup

* **Provided Log File:** The log file is very large. You MUST read it in 100-line chunks. Reading more than that at once degrades your attention and can lead to context rot, so avoid it.

* `scratchpad.md`: A scratchpad for references you read and things you've learned so far about the log, flows, data, and anything else which might help you pick up where you left off last time. If the file does not exist, create it.

* `analysis.md`: A progression report. If the file does not exist, create it.

* **Initial Action:** Extract basic information from the first few lines of the log: what is being logged and executed, and anything that will help you determine what you're looking at.

## Tracking Rules

* The log most likely contains one or more components. Establish which components are being used, their IDs, their roles, and how to distinguish between them. Every time you learn about a new component/ID, document it immediately.

* If the log contains data, decode it and maintain a running state of what goes where and how it is being processed.

* If reading 100 lines drops you in the middle of a flow or a data chunk, or if you think the next 100 lines contain what you need to diagnose the current chunk, you may read exactly one additional chunk, but no more.

## Execution Loop

Move through the log in increments of **100 lines**. For each chunk, perform the following steps sequentially:

1. **Read & Map:** Read the current chunk (lines N to N+99).

2. **Verify:** Cross-reference each log event against expected behavior and the current data in `scratchpad.md`.

3. **Annotate:** If an event deviates from expected behavior, stop immediately. Append an entry to `analysis.md` describing:

   * The specific log line(s).

   * Why it is flagged (e.g., state mismatch, missing heartbeat, invalid sequence, etc.).

   * The evidence found in `scratchpad.md`.

4. **Pause:** After annotating a deviation, wait for user input. Do not proceed until the user confirms your findings, provides a counter-hypothesis, or explicitly instructs you to continue.

5. **Iterate:** If the chunk is "clean," update `analysis.md` with the phrase: *"Lines N to N+99 verified as nominal,"* and proceed to the next chunk.

## Communication Rules

* **Evidence-First:** Never state a bug exists without citing the exact log line and the specific requirement or code flow it violates.

* **Hypothesis Testing:** If you suspect a bug, you must propose an alternative hypothesis that might explain the log entry without it being a system failure.
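The prompt ends here. Separately from the prompt, the chunking arithmetic it asks the agent to follow can be sketched in Python. This is an illustration under stated assumptions, not part of the protocol: `read_chunk` and `chunk_ranges` are hypothetical names, line numbers are taken to be 1-indexed, and ranges are inclusive so that each chunk holds exactly 100 lines.

```python
from itertools import islice
from typing import Iterator


def chunk_ranges(total_lines: int, chunk_size: int = 100,
                 start_line: int = 1) -> Iterator[tuple[int, int]]:
    """Yield inclusive (first, last) line ranges covering the log in order."""
    first = start_line
    while first <= total_lines:
        last = min(first + chunk_size - 1, total_lines)
        yield first, last
        first = last + 1


def read_chunk(log_path: str, start_line: int,
               chunk_size: int = 100) -> list[str]:
    """Return up to chunk_size lines beginning at 1-indexed start_line."""
    if start_line < 1:
        raise ValueError("start_line is 1-indexed and must be >= 1")
    with open(log_path, encoding="utf-8", errors="replace") as handle:
        # islice skips the earlier lines without keeping them in memory.
        window = islice(handle, start_line - 1, start_line - 1 + chunk_size)
        return [line.rstrip("\n") for line in window]
```

A helper like this could be exposed to the agent as a tool or used in a harness that feeds it one range at a time, so the 100-line limit is enforced by the code rather than by the model's self-discipline.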

Actionable Outcomes for Practitioners

Applying this protocol helps you avoid the context overload that leads to wasted tokens and duplicated code.

- Isolation of Code and Diagnostics: Forcing the LLM to analyze the log first, in 100-line chunks, lets it pinpoint the root error while the context is still fresh and unencumbered.

- Elimination of Flailing: The Evidence-First and Hypothesis Testing rules force the model to justify its assumptions with exact line citations and to weigh a benign explanation, which keeps it from chasing phantom bugs down hallucinated rabbit holes.

- The 5-Line Resolution: When the context is full, the LLM tries to do too much with too much data and struggles to separate the good data from the noise. With a clean context, the agent can isolate the signal far more easily. The resulting fix tends to be immediate, precise, and minimal, such as a simple 5-line idempotency check.

Adaptations and Troubleshooting

While this linear loop handles most standard debugging scenarios smoothly, highly variable production environments occasionally require adjustments. Use these four tweaks to adapt the workflow to your specific needs:

- Window Size - Are your flows longer than 100 lines? Shorter? Adjust the chunk size so that an entire flow fits into one or two reads.

- Context Degradation - Is the agent ignoring the workflow or looping? Start a fresh session, tell it exactly which line you left off on, and keep going.

- Incomplete Analysis - Is the agent detecting an issue but failing to pinpoint it? Don't let it rummage through the codebase and bloat the context. Use a subagent to do the heavy lifting for that specific issue. Once the subagent finds the answer, discard its messy context and bring only the final conclusion back to your main thread.

- Write a New Test - Stuck on how to fix it? Ask the agent to plan a test that executes this exact broken use case first. This keeps the context focused and makes the final fix regression-proof.
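When restarting in a fresh session, the line to resume from can often be recovered from analysis.md itself. The following Python sketch assumes the agent wrote the "verified as nominal" phrase exactly as the prompt specifies; `next_start_line` is a hypothetical helper name, and the result is only a hint, since a chunk that was flagged rather than marked nominal will not appear in the match.

```python
import re

# Matches the progress phrase the prompt asks the agent to record,
# e.g. "Lines 101 to 200 verified as nominal".
NOMINAL = re.compile(r"Lines (\d+) to (\d+) verified as nominal")


def next_start_line(analysis_text: str) -> int:
    """Return the line after the last chunk marked nominal, or 1 if none."""
    ends = [int(match.group(2)) for match in NOMINAL.finditer(analysis_text)]
    return max(ends) + 1 if ends else 1
```

Check the returned line against the last flagged entry in analysis.md before telling the new session where to continue.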